For some years now, I’ve been a big fan of Roadkil’s Unstoppable Copier, an easy-to-use utility for copying or moving files from one drive to another. We often have to move large amounts of data from old hard drives to new ones, either because a client is upgrading to a new computer/OS or because a hard drive is failing.

For years, we preferred Xcopy from the command prompt over dragging and dropping files in Windows Explorer because, in our opinion, Xcopy seemed faster when copying large numbers of files. Increasingly, however, Xcopy was becoming unusable because it dies when a path plus filename exceeds 256 characters. It doesn’t skip the file and it doesn’t continue; it just stops cold. This usually happened in the Temporary Internet Files folders, which meant we had to clean up the old drive before running the copy. In the case of a failing drive, this might be impossible.
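One way to avoid the surprise is to scan the source for over-long paths before starting the copy. The following is only a minimal sketch, not anything Xcopy itself provides: the function name `find_long_paths` is made up for illustration, and the default limit of 256 simply mirrors the figure quoted above (Windows' own `MAX_PATH` constant is 260, so adjust to taste).

```python
import os


def find_long_paths(root, limit=256):
    """Yield (length, full_path) for every file under root whose
    full path is longer than `limit` characters.

    `limit` defaults to the 256 characters mentioned in the post;
    this is an assumption, not a value read from any tool.
    """
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if len(full) > limit:
                yield len(full), full


# Example (hypothetical drive letter):
# for length, path in find_long_paths("D:\\"):
#     print(length, path)
```

Running something like this first gives you a list of the files to rename, shorten or exclude, which is cheaper than discovering them halfway through an overnight copy.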

After a little research, we discovered Unstoppable Copier, which solved a lot of problems for us. The program was designed as a data recovery tool (in fact, if you visit the website, you will find the download page under Data Recovery). As such, it can retry copying a file a number of times, and if the copy keeps failing, it logs the damaged file, skips it and moves on. This is great because we can now start a large copy at the end of the day and know that it will be finished by the time we come in the next morning. No more crashed Xcopy transfers!
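The retry, log and skip behavior described above can be sketched in a few lines of Python. To be clear, this is not how Unstoppable Copier is implemented (I have no idea what it does internally, and it also attempts partial recovery of damaged files, which this does not); it is just an illustration of the idea. The function name, the `retries` and `delay` parameters and the pluggable `copy` argument are all assumptions of mine, and the destination is assumed to sit outside the source tree.

```python
import logging
import os
import shutil
import time


def copy_tree_with_retries(src_root, dst_root, retries=3, delay=0.0,
                           copy=shutil.copy2):
    """Copy every file under src_root into dst_root.

    Each file is attempted up to `retries` times. A file that still
    fails is logged and skipped so the rest of the job can continue.
    Returns the list of source paths that could not be copied.
    """
    failed = []
    for dirpath, _dirnames, filenames in os.walk(src_root):
        rel = os.path.relpath(dirpath, src_root)
        target_dir = os.path.normpath(os.path.join(dst_root, rel))
        os.makedirs(target_dir, exist_ok=True)
        for name in filenames:
            src = os.path.join(dirpath, name)
            dst = os.path.join(target_dir, name)
            for attempt in range(1, retries + 1):
                try:
                    copy(src, dst)
                    break  # success, stop retrying this file
                except OSError as exc:
                    logging.warning("attempt %d/%d failed for %s: %s",
                                    attempt, retries, src, exc)
                    time.sleep(delay)
            else:
                # Every attempt failed: record the file and move on.
                failed.append(src)
    return failed
```

The important design point is the `else` branch on the retry loop: a bad file ends up in the returned list instead of aborting the whole run, which is exactly what Xcopy would not do for us.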

But recently, I ran into a situation where I needed to move millions of individual files totaling some 650GB of data to a large 2TB hard drive to gain some additional space on a server. The problem was that the server and drives HAD to remain in operation because they were used in timed processes that could not be stopped for more than a couple of hours per day. Every tool I had in my arsenal (Acronis, Ghost, CloneZilla, Double-Image, etc.) needed more than a couple of hours to move that volume of data. Since the old and new drives could be in operation at the same time, and because the data was well organized in specific folders that could be moved independently, I decided to use Unstoppable Copier to move individual folders (at the root level) and then update the individual applications to point to the new drive locations after the move.

The first folder I attempted to move had 400,000 individual files but only about 5GB of data. After the first 50,000 files and several hours of processing time, it became clear that continuing down this path was going to be seriously time consuming. Because Unstoppable Copier copies files one at a time and we were transferring across a network from a NAS RAID device to a local mirrored RAID, the time increased significantly as the number of individual files went up. Moving all 650GB was going to take a couple of weeks of continuous file transfers, and that was not possible in this environment.

I want to make it understood that this review is not a criticism of Unstoppable Copier and Unstoppable Copier is not going to be dropped from my toolkit! Unstoppable Copier was designed primarily to recover data NOT to provide highly efficient file copy functions. So, in this case, it was clear that Unstoppable Copier was simply the wrong tool for this particular job, not because of any inherent failure on the part of Unstoppable Copier, but because it was designed to do a slightly different job. It was kind of like using a monkey wrench to drive in a nail. I could accomplish my goal eventually, but not without a lot of work.

I have used FTP transfer software for years and have been a FileZilla fan for many of them. One of the features I like most about FileZilla is its ability to transfer multiple files at the same time. This means that if you have a large file that will take a while to download and a bunch of smaller files to download as well, FileZilla can keep downloading the smaller files at the same time to keep the data flowing efficiently. This multi-threaded approach improves the speed of downloads significantly.

It’s kind of like being at Walmart when there is only one line open for checking out. One person goes through the line at a time, but the line backs up because more than one person at a time joins the line. When the manager opens a second line, the checkout process goes twice as fast. Open five checkout lines and the checkout process goes five times as fast. You get the point.
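For the programmers in the audience, the checkout-lane idea looks roughly like this in Python. This is a minimal sketch of multi-threaded copying in general, not a description of how FileZilla or any particular copy utility works; the function names and the default of five workers (five "checkout lanes") are my own choices for illustration. Real-world gains are rarely a clean multiple of the thread count, since the disks and the network eventually become the bottleneck.

```python
import os
import shutil
from concurrent.futures import ThreadPoolExecutor


def list_files(src_root):
    """Return the relative paths of all files under src_root."""
    rels = []
    for dirpath, _dirnames, filenames in os.walk(src_root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            rels.append(os.path.relpath(full, src_root))
    return rels


def copy_one(src_root, dst_root, rel):
    """Copy a single file, creating its destination folder if needed."""
    dst = os.path.join(dst_root, rel)
    os.makedirs(os.path.dirname(dst), exist_ok=True)
    shutil.copy2(os.path.join(src_root, rel), dst)
    return rel


def parallel_copy(src_root, dst_root, workers=5):
    """Copy all files using `workers` threads at once.

    Each worker thread is one open checkout lane: while one thread
    waits on a slow file, the others keep the data moving.
    """
    rels = list_files(src_root)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda rel: copy_one(src_root, dst_root, rel),
                             rels))
```

Threads suit this job because file copies spend most of their time waiting on disk and network I/O rather than on the CPU.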

So I fired up my trusty Google search and found several multi-threaded file copy utilities, but one caught my eye: RichCopy. Apparently, RichCopy was developed internally at Microsoft to improve the speed of file copying in test environments at Microsoft. It was kept in-house for several years and then around 2008-2009, it began to leak out on some file-sharing networks. Finally, Microsoft just released it to the public and it can be downloaded here.

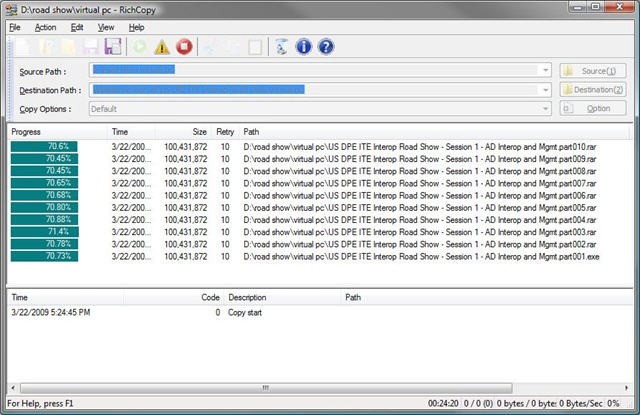

I initially noticed RichCopy because it bore a certain resemblance to Unstoppable Copier and because it looked easy to use. I was surprised after installing it to find it to be as feature rich as you could hope and many of the features are even beyond what my needs are right now, so the program will easily grow with me as my needs change in the future.

The first test of RichCopy was on a folder with about 8GB of data and over 500,000 individual files. Unstoppable Copier projected 12 to 24 hours to complete the copy, but RichCopy finished in under 6 hours. I discovered after the fact that RichCopy was designed to work across networks, and in benchmark tests it beat fourteen other multi-threaded file copy utilities in network copies. Since that was exactly what I was trying to do, I knew I now had exactly the right tool for the job.

Another folder I copied was over 50GB with over 130,000 individual files. I set RichCopy to move and verify each file and started the copy. With files averaging 3MB each, RichCopy moved 35,000 files in a little over 1.5 hours across a 100Mbit network. These 35,000 files represented about 45% of the total data (over 20GB).
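To put those numbers in perspective, here is a quick back-of-the-envelope calculation. It is only a rough sketch: I am rounding to 20 gigabytes in 1.5 hours, using decimal units (1 GB = 1000 MB), and ignoring protocol overhead and the extra time spent on verify. The function name is made up for illustration.

```python
def throughput(gigabytes, hours, link_mbit):
    """Return (MB/s, Mbit/s, fraction of the link used).

    Uses decimal units (1 GB = 1000 MB) and ignores network overhead,
    so treat the result as a rough estimate only.
    """
    megabytes = gigabytes * 1000
    seconds = hours * 3600
    mb_per_s = megabytes / seconds
    mbit_per_s = mb_per_s * 8
    return mb_per_s, mbit_per_s, mbit_per_s / link_mbit


mb_s, mbit_s, share = throughput(gigabytes=20, hours=1.5, link_mbit=100)
print(f"{mb_s:.1f} MB/s, {mbit_s:.0f} Mbit/s, {share:.0%} of the link")
# prints: 3.7 MB/s, 30 Mbit/s, 30% of the link
```

In other words, even with verify turned on, the transfer was using somewhere around a third of the theoretical capacity of the 100Mbit link.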

UPDATE: In a subsequent test using 50 threads, I discovered that RichCopy doesn’t check the files it is trying to move against its internal database of active threads, so what you get is a series of errors indicating that a file is locked by another process. Additionally, with the higher thread count I saw about a 15-20% decrease in the bytes per second being transferred, so throughput suffers slightly. I also ran a couple of tests with file verify turned on and found that verify slows individual threads down in proportion to the size of the file: the bigger the file, the longer the verify and the longer the transfer takes to complete. I expected this result, as I knew that verify would take longer, but it is interesting to watch it happen in real time. My personal recommendation is to keep your thread count between 15 and 20 to get the best results. I may try another test using only 5 threads to see if that works any better. Will report back.

RichCopy isn’t perfect. You can tell that it was developed for in-house use because some of the help screens haven’t been proofread and contain typos. It also takes a little practice to find the sweet spot for the number of file transfers you can run at the same time. Theoretically you can set up 256 threads to run at once, and while I might test this in the future, 20 is as high as I’ve gone so far, and my guess right now is that 15 might be the right number for my purposes. I haven’t been able to find any recommendations on the web about this setting, so if I come up with the perfect number, I’ll update this post accordingly.

One negative I’ve noticed is that when you set the transfer mode to “Move”, the files are moved to the appropriate folders on the destination drive, but the empty folders are left on the source drive. I don’t know whether this has something to do with the multi-thread function or is just an oversight on the developer’s part, but it means I have to do about 5 or 10 minutes’ worth of cleanup after each move. Not a big deal, but you would assume that Move means Move and that the folders would be removed from the source drive once the move is complete.
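The cleanup of leftover empty folders is easy to script. The following is a minimal sketch of my own, not a RichCopy feature; the function name is hypothetical, and it assumes you have already confirmed the move completed, since it deletes any directory under the given root that contains nothing at all.

```python
import os


def remove_empty_dirs(root):
    """Delete empty directories under root, deepest first.

    The root folder itself is left in place. Returns the list of
    directories that were removed.
    """
    removed = []
    # topdown=False visits children before their parents, so a folder
    # that only held empty subfolders is itself empty when we reach it.
    for dirpath, _dirnames, _filenames in os.walk(root, topdown=False):
        if dirpath == root:
            continue
        if not os.listdir(dirpath):
            os.rmdir(dirpath)
            removed.append(dirpath)
    return removed
```

Because `os.rmdir` refuses to delete a directory that still has content, any folder holding a file that failed to move is left untouched.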

The bottom line is that if the number of files is huge, the sizes of the files vary greatly and you are copying across a network, RichCopy’s multi-threaded copying is the only way to go. The benchmarks indicate that in a local drive to drive copy process, RichCopy is twice as fast as Unstoppable Copier. In large file copies, Unstoppable Copier is about 2.5 times faster than RichCopy. And, in network copies, RichCopy is about 3 times faster than Unstoppable Copier. My experience so far is matching up well to the benchmarks, so I can positively confirm that in the right situation RichCopy is an excellent solution. As I said before though, Unstoppable Copier will not be leaving my toolkit because while both tools perform basically the same task, one tool can shine over the other in different situations.

Here are my recommendations:

- Unstoppable Copier: Set it and forget it operation; large files; drive to drive; speed not critical

- RichCopy: Network transfers, wild variations in the file sizes with mostly smaller files and few large files; drive to drive; transfer time limited

Give both a try and see if you prefer one tool over the other. I’d be interested in reading your opinions in the comments below.

…if everybody just used “Thunderbolt”!!!

LOL!

http://www.apple.com/thunderbolt/

On the flipside, I was at work and saw some BellSouth guys installing and splicing some fiber. On a whim, I asked one of the more “experienced” technicians, as I perceived him to be, whether it was possible to combine and send more than one color/wavelength of laser over a single strand of fiber optic cable and split it out at the other end, and he said “SURE!” You know what this means for data communication??? Unlimited data bandwidth possibility!

Joel:

It’s already possible and used 🙂

http://en.wikipedia.org/wiki/Wavelength-division_multiplexing

You’re talking data speeds in the terabits *ahem* which will be amazing *drools*

To the subject: RichCopy is simply *brilliant*. I’m currently, however, using robocopy /MT:15 (MT being multi-threaded, 15 being the number of threads) to back some stuff up to a USB drive on a customer server and reaching 78 megabytes (not megabits) per second.

Good Day! I hope you’re enjoying a pleasant one. Thank You for this informative, helpful article. I’ve read dozens of articles so far, and have evaluated many file copy utility comparisons. My evaluation of file copy utility comparison articles then, is that this is the “Best in Class”.

I found with most other articles a profound feeling that I wanted to escape or fall asleep. Your article was a nice read, so much so that I read it a second time to enjoy it twice. Along with the concise, informative manner in which you present the material, you make it easy for readers to understand, especially with your humorous references to monkey wrenches and Walmart. As funny as they are, they are also real-world scenarios that others can comprehend. I also like the way you integrate your professional real-world experiences with the utilities you’re evaluating, and the honorable way you present both. Your knowledgeable non-geek approach and presentation are a refreshing, smile-producing experience.

My research now ended, I’ll be downloading Unstoppable and RichCopy momentarily, right after I find a fast downloading utility… kiddin’!

Enjoy!

John B.

An excellent and very readable article, many thanks.

Richcopy is definitely an almost Number 1, multi threading is a huge plus.

BUT!!! It does not copy folders, only the individual files in a folder, so the destination folder had to be created manually, which is a huge pain. I could not find a way around it.

Regards,

PL

Hi Peter. You only need to create a root target folder. RichCopy will copy over the source folder’s hierarchical directory structure and place the files according to their source locations. Make sure the advanced option settings are properly configured.

Try TeraCopy; it has worked well for my folder and file copies. I also used RichCopy but noticed that it would miss or skip some files.

Unfortunately, when it comes to a really large number of files (in my case, 2 million+, spread across 800GB), TeraCopy would go VERY slowly during the initial file count and ultimately hung.

Indeed this would work, but as Peter said, it lacks the ability to copy whole folders. There is only one piece of software I know about that would do that, it is quite new on the market, and it’s called LongPathTool. Now I ain’t being pompous when I say that, but it is what it is. Long Path Tool does what it promises; all I know is that it’s a trustworthy application.

You can try another free portable file copier, Exshail CopyCare, from the site below. Its main feature is a preview list of files before copying, with the seven options below.

1. “Source > Target – Copy Files New and changed from Source”

2. “Source > Target – Copy Files New From Source”

3. “Source > Target – Copy Files Changed from Source”

4. “Target > Source – Copy Files Changed from Target”

5. “Target Source Copy Files having Size Difference”

6. “Delete Files Orphan from Target”

7. “Source = Target – Copy Exact to Target – Overwrite All (Delete Orphans from Target)”

https://sites.google.com/site/exshail/exshailcopycare

I tried both.

I was copying large files over a gigabit network to a NAS with a 4-disk SATA RAID 5.

Both applications were significantly slower than Windows copy, with RichCopy being the slowest. I tried adjusting the number of threads to no avail.

I got a solid 75MB/s using Windows copy, 29MB/s using Unstoppable Copier and 10-15MB/s with peaks of 25MB/s using RichCopy.

So the features and configuration of the two applications are far better than standard Windows copy, but at a significant penalty in speed.

It might not seem like much but when you are dealing with multiple TB of data copy times can vary dramatically.

As for the original issue of live data and too many files, you could also have used sector-level backup software like ShadowProtect. It works online and offline and doesn’t get slowed down by lots of small files. You could then just mount the image file, restore it to an external drive, or restore it into a VHD/VHDX container. It is limited in that it can only back up and restore whole logical volumes, not individual files, but it is a very handy bit of software.

Make sure you change the /FC switch to 4096 on RichCopy to get the fastest throughput.

We have a NAS box with four bonded 1Gbps connections using 802.3ad. We copy very large VMware backups from that NAS to a Windows 2008 Server with an external RDX drive on a USB 3.0 interface. The server uses two bonded 1Gbps network connections as well. I noticed that Windows Explorer outperformed RichCopy and every other utility until I changed the File Cache setting from the default to 4096; now they perform the same. I am getting roughly 95-110 megabytes per second (bytes, not bits).

Michael, that sounds great. Can you please share what your other settings are? directory, file, and search thread counts.

Hi,

Thanks for this interesting reading. I thought I could almost find what I was looking for…

Maybe I did in fact.

Are any of those copiers able to copy huge files on multiple disks?

Let me explain: I have lots of files on a NAS that I want to back up onto separate disks of different sizes, and I want to be able to plug in a disk and read the files directly, like we used to do with floppies 🙂

When the first disk is full, “Please insert next disk”. At best, it could optimize disk space by selecting files that would fill up each disk…

Would they do the trick?

I was facing problems copying files with long filenames, so I used Long Path Tool to resolve it as suggested.

“Long Path Tool” is very helpful for this error !

best solution for your problem.

I have always been a patron of RichCopy ever since its inception. Although I tried other softwares and considered switching to them, RichCopy really caters to my needs and it has several tools that are very valuable to me and our company. Keep it up!

Long path tool is the very good program for error, unlock solution.

Try it and solve your problem.

I used long path tool and I solve my error, unlock problem solution.

I might provide something here which is a bit more helpful than RichCopy from my own experience. I would recommend you to use GS RichCopy 360. I found this way better than in every aspect than RichCopy. The additional features are pretty amazing as well. You must give it a try. Hope this will help.

Hello people you can try this software htt://descargarpaquetecopies.cubava.cu

I don’t normally reply to my own posts, but I feel this is a time I need to. I was kind enough to make the first post about GS RichCopy 360 public, but since then we have been getting one or two posts a week about this product. The posts are similar in style and come from similar IP addresses, and if I didn’t know better, I’d say someone is trying to spam my site via the comments to sell this product. I offer reviews of products and I expect some comments about competing products, but the people supporting GS RichCopy 360 are going overboard, and anyone who reads this article and the public comments should be aware that someone appears to be posting multiple comments supporting this product from what I believe are spoofed email accounts. All I have to say is that the spammers have now been warned and put on notice: I’m blocking their comments, and if their behavior continues, I will be blocking IP addresses next. Do not purchase GS RichCopy 360, even if it is a good and safe product. I do not support businesses that use spam marketing techniques and I suggest my readers avoid them as well.

good article

I use this tool. This tool is very helpful for me.

use robocopy

When I first got this program (RichCopy, though the download is actually named “Hoffman Utility Spotlight” and shows two business-looking women on the opening screen), I thought it was the answer to all copying problems. I may have been wrong; I say “may” because the whole issue is still up in the air. First off, when downloading the file I got two versions whose byte counts were slightly different (a virus, or some other odd reason, given that I got it from Microsoft?). The options are fantastic: many other copy programs do not spell out, without your having to make test copies, what the program is actually going to do (e.g. leave the source alone, delete it, etc.). Things were going great, but after a little while problems began. This is a constant theme with file/folder copy programs, especially when it comes to integrating with the context menu (once it’s there and works, then poof, it’s gone); I think that was one of about two issues I had with it, the other being the 256-character file name limit, which is absurd. After a few more problems I quit on it, because a copier program must be “dead nuts” accurate, unlike many other programs that can be “a little off” and still get away with it. That is where I left the matter, and I did not bother with it any more.

As the author of this article said, Unstoppable Copier is a good, albeit slower, copier and is excellent at what it is supposed to do, but I found that it was not a suitable solution for everyday copying. Some other notes: TeraCopy would be a good solution, but it is not only unstable in its context menu, as mentioned above, it also does not copy files with 256-character filenames (because of this I have not used TeraCopy for a long time and don’t know whether they have fixed this aspect of the program).

I tried 6 to 12 or more copiers, all with the same issues or with features that are unacceptable or insufficient. One caveat is that I am using a Windows 7 setup without the second service pack, as Microsoft’s Big Brother and/or Big Sister act is getting to be too much (it is a well-known fact that Microsoft is trying to force its “telemetry” of our data down our throats! Where the hell is Judge Judy on this one, chewing Microsoft’s rear end out?). Microsoft, and all other organizations for that matter, are given carte blanche to intrude on people’s personal computers, a practice that should be strictly prohibited. Think about it: if you or I went over to an organization and demanded to look into their servers, we would hear the word “security” as we were tossed out of the freaking building. Hey people, world, where the freaking frick is your sanity, logic and reasoning, and your quest to remain free another day! Mrs. Torrance to Danny: wake up!

Anyhow, the field of free file copier software has very few acceptable options (although it has been a long time since I checked for newly available programs), whereas some other program areas are “loaded to the hilt” with competition (e.g. partitioning software, where it is difficult to choose one because there are so many good options). So, in my experience, I have concluded that FastCopy is by far the best copy software: it RARELY fails to tackle a job; it goes over the 256-character file name length; it has about all the features one needs plus a few perks (e.g. file wipe on copy); it has not only the context menu but an interface too; it allows pasting with its own paste or with Microsoft’s paste (this looks like no advantage, but it is very handy when you want to know what is going on in a copy, as it alerts you to what is going to happen; it is annoying as an all-the-time copier but a great feature in certain key situations); its context menu and settings are spot on; and it is a very stable program.

I am not certain how FastCopy handles the “multiple thread” aspect, but I know for SURE that it tackles the task of copying files better than anything I have tested, it has about all the features one needs in a copier, and it is the most stable, hands down. It is also fast. So my recommendation is FastCopy all the way, as it is the only stable copier that I know.